Monday, May 22, 2024

Engineering standards are not enough when suppliers read them differently

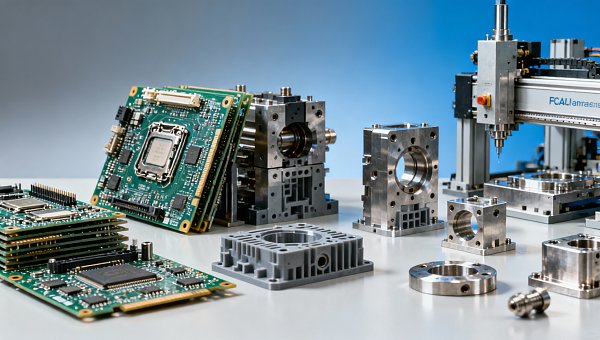

Engineering standards are meant to align global manufacturing, yet suppliers interpret them differently across PCBA manufacturing, tech hardware, and tooling solutions. That gap can trigger quality drift, cost overruns, and compliance risk in settings ranging from plastic injection mold factory workflows to industrial sustainability, crop monitoring, and industrial infrastructure projects. This article explores why engineering standards alone are not enough and how clearer benchmarking reduces risk across global manufacturing.

For procurement teams, engineering reviewers, project managers, quality leaders, and financial approvers, the issue is rarely the absence of a standard. The real problem is that two suppliers can reference the same ISO, IPC, or IATF clause and still build, inspect, package, and validate in different ways. In cross-border manufacturing, that interpretation gap often surfaces only after tooling launch, pilot production, field complaints, or delayed compliance review.

This matters across sectors. A PCB stack-up tolerance that looks acceptable on paper may fail thermal cycling in automotive electronics. A plastic injection mold factory may quote to the same drawing but apply different gate design assumptions, steel grades, or process capability targets. In sustainable agriculture and environmental infrastructure, equipment suppliers may follow the same reference framework yet diverge on test duration, contamination thresholds, or maintenance intervals.

That is why technical benchmarking has become a strategic layer between written engineering standards and real-world supplier execution. Platforms such as Global Industrial Matrix (GIM) help companies compare not only declared compliance, but also process discipline, inspection logic, digital traceability, and cross-sector performance expectations before risk becomes cost.

Why the Same Standard Produces Different Supplier Outcomes

Engineering standards define intent, minimum criteria, and accepted methods, but they do not eliminate interpretation. A standard may specify dimensional tolerance, cleanliness level, solderability criteria, or documentation control, yet it often leaves room for process setup, sampling method, inspection frequency, and escalation thresholds. That flexibility is useful in engineering, but it also creates variation when suppliers differ in capability, maturity, or internal quality culture.

In practical sourcing, three suppliers may all claim alignment to the same requirement and still operate at different levels. One may inspect 100% of critical dimensions on first article samples, another may sample 5 pieces per cavity, and a third may rely mainly on operator checks. On paper, all three may appear compliant. In production, the difference can translate into a scrap gap of 2% to 8%, a launch delay of 1 to 3 weeks, or repeated corrective action cycles.
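To see why these sampling choices are not equivalent, a rough sketch can estimate the chance that a sample contains at least one defective part. It assumes defects occur independently at a constant rate, which real processes rarely satisfy exactly. The function name, the 2% defect rate, and the 50-piece comparison are illustrative assumptions, not values taken from any standard.

```python
def detection_probability(defect_rate: float, sample_size: int) -> float:
    """Chance that a random sample contains at least one defective part.

    Assumes independent defects at a constant rate (a simplification).
    """
    if not 0.0 <= defect_rate <= 1.0:
        raise ValueError("defect_rate must be between 0 and 1")
    if sample_size < 0:
        raise ValueError("sample_size must be non-negative")
    return 1.0 - (1.0 - defect_rate) ** sample_size


# Hypothetical comparison: 5 pieces per cavity versus a 50-piece sample,
# both at an assumed 2% defect rate.
for n in (5, 50):
    print(f"n={n}: {detection_probability(0.02, n):.1%}")
```

Under these assumptions, a small sample will usually miss a low-rate defect, which is one way two suppliers can both "pass" first article inspection while shipping very different quality levels.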

Where interpretation gaps usually appear

The highest-risk areas are rarely the obvious ones. Problems often emerge in test limits, material substitutions, tooling maintenance windows, process capability assumptions, and the definition of acceptable cosmetic defects. In electronics, IPC acceptance criteria may be read differently depending on end use. In automotive and mobility, PPAP readiness may vary significantly between a supplier experienced in Tier-1 programs and a factory new to regulated production.

In industrial ESG and infrastructure projects, standards can be interpreted differently around water treatment durability, corrosion testing, lifecycle maintenance, and environmental monitoring documentation. In smart agri-tech, sensor reliability, enclosure ratings, and field-service intervals often depend on how the supplier converts written requirements into actual operating assumptions across humidity, vibration, and dust exposure conditions.

Typical root causes

- Different internal quality systems, from basic document control to fully integrated traceability across 3 to 5 production stages.

- Uneven engineering depth, especially when suppliers can manufacture to print but cannot challenge ambiguous specifications early.

- Commercial pressure that encourages quoting before process risk, tooling life, or validation scope has been fully clarified.

- Cross-sector misunderstanding, such as applying consumer electronics assumptions to automotive, agri-tech, or infrastructure-grade hardware.

The table below shows how identical standards can still produce different operational outcomes when supplier interpretation is not normalized through benchmarking.

The key lesson is simple: a standard is a shared reference point, not a guarantee of identical execution. Companies that buy complex hardware, tooling, or infrastructure components need a second layer of control that compares how suppliers interpret and operationalize the same requirement set.

How Interpretation Gaps Create Cost, Quality, and Compliance Risk

When standards are read differently, the first visible effect is usually not a catastrophic failure. It is a gradual drift. A production line starts with acceptable pilot samples, then Cpk declines below 1.33 on a critical feature, preventive maintenance stretches from every 50,000 cycles to 80,000 cycles, or incoming inspection begins to catch cosmetic and dimensional deviations more often than forecast. Small deviations compound into expensive operational noise.
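The Cpk drift described here can be checked with a few lines of code. The sketch below is a simplified illustration: it uses the overall sample standard deviation rather than a within-subgroup estimate, and the measurements, specification limits, and names are hypothetical.

```python
from statistics import mean, stdev


def cpk(samples, lsl, usl):
    """Capability index: distance from the mean to the nearer spec limit,
    divided by three sample standard deviations."""
    mu = mean(samples)
    sigma = stdev(samples)
    return min(usl - mu, mu - lsl) / (3 * sigma)


# Hypothetical measurements of one critical feature (mm).
LSL, USL = 9.90, 10.10          # assumed specification limits
CPK_TARGET = 1.33               # threshold mentioned in the text

pilot = [10.00, 10.01, 9.99, 10.00, 10.02, 9.98, 10.01, 9.99]
drifted = [10.03, 10.05, 10.02, 10.06, 10.04, 10.01, 10.05, 10.04]

for label, data in (("pilot", pilot), ("drifted", drifted)):
    value = cpk(data, LSL, USL)
    status = "OK" if value >= CPK_TARGET else "below target"
    print(f"{label}: Cpk={value:.2f} ({status})")
```

In this made-up data every part is still inside the limits, yet the shifted mean pulls Cpk under the target, which is the kind of gradual drift that pilot samples alone do not reveal.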

For business evaluators and finance approvers, the hidden cost is especially important. A low unit price can be offset by added inspection labor, expedited freight, line stoppages, warranty reserves, or repeated engineering changes. In many programs, a 3% purchase-price reduction can disappear quickly if defect sorting adds 2 operators per shift for 4 weeks, or if launch delays push back customer revenue milestones by 10 to 15 business days.
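A quick calculation shows how such a saving can be erased. The 3% reduction, 2 operators per shift, and 4 weeks come from the paragraph above. The purchase value, shift pattern, and hourly labor rate are hypothetical assumptions chosen only to make the arithmetic concrete.

```python
def sorting_labor_cost(operators_per_shift, shifts_per_day, hours_per_shift,
                       days_per_week, weeks, hourly_rate):
    """Total labor cost of a temporary defect-sorting activity."""
    hours = (operators_per_shift * shifts_per_day * hours_per_shift
             * days_per_week * weeks)
    return hours * hourly_rate


def net_saving(purchase_value, price_reduction, extra_cost):
    """Price saving minus the added cost it triggered."""
    return purchase_value * price_reduction - extra_cost


cost = sorting_labor_cost(
    operators_per_shift=2,   # from the text
    shifts_per_day=2,        # assumption
    hours_per_shift=8,       # assumption
    days_per_week=5,         # assumption
    weeks=4,                 # from the text
    hourly_rate=25.0,        # assumption, fully loaded cost per hour
)
net = net_saving(purchase_value=400_000, price_reduction=0.03, extra_cost=cost)
print(f"sorting cost: {cost:,.0f}  net saving: {net:,.0f}")
```

With these assumed inputs the sorting labor alone exceeds the price saving, before counting expedited freight or delayed revenue.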

Risk by stakeholder group

Operators and users experience instability first: inconsistent fit, recurring alarms, maintenance interruptions, or reduced throughput. Technical evaluators face unclear root causes because the documented standard looks correct while actual process discipline differs. Project managers absorb schedule pressure when qualification loops repeat. Quality and safety teams carry the burden of containment, audit response, and traceability review. Decision-makers then confront delayed ROI, supplier disputes, and damaged customer confidence.

Across integrated supply chains, these risks become more serious when products move between sectors. An EV component may include electronics, molded parts, machined fixtures, and environmental sealing requirements in one assembly. A smart agriculture platform may combine sensors, connectivity modules, housings, and field-mounted hardware that must operate reliably for 12 to 36 months in variable outdoor conditions. A standard-by-standard review is not enough unless the interfaces between those elements are benchmarked together.

Common risk signals before major failure

- First article approval passes, but recurring deviations appear within the first 3 production lots.

- Supplier PPAP or qualification files are complete, yet process control plans lack clear reaction thresholds.

- Test records exist, but sample size, duration, or environmental conditions are not aligned across vendors.

- Engineering change requests increase after tooling release, often by 2 to 4 iterations, due to unclear original assumptions.

The following table helps procurement and cross-functional teams identify how a standards interpretation gap typically translates into business exposure.

A standards gap becomes expensive when it is discovered late. The earlier companies compare execution assumptions, the lower the cost of correction. That makes technical benchmarking a sourcing control, not just an engineering exercise.

What Clear Benchmarking Adds Beyond Engineering Standards

Benchmarking does not replace standards. It makes standards operational. The purpose is to convert broad compliance language into comparable supplier evidence across process capability, validation depth, equipment control, traceability, and maintenance discipline. Instead of asking whether a supplier is “aligned,” benchmarking asks how that alignment is executed on the factory floor, at the sub-tier level, and during the service stage.

For a multi-disciplinary environment like modern manufacturing, that matters because performance is interconnected. A molded enclosure affects ingress protection. A PCB assembly affects thermal stability. A filtration module affects maintenance burden and environmental compliance. A benchmarking framework allows procurement, engineering, quality, and management teams to compare suppliers using the same 4 to 6 decision dimensions rather than isolated declarations.

Core benchmarking dimensions

A strong benchmarking model typically measures technical consistency, production control, documentation rigor, lifecycle risk, and scalability. In many industrial categories, the difference between an adequate supplier and a reliable long-term supplier is visible in reaction plans, calibration control, validation repeatability, and change-management discipline rather than in the initial quotation.

- Specification translation: Can the supplier turn design intent into measurable controls, test limits, and operator instructions within 5 to 10 working days?

- Process capability: Are critical features monitored with clear Cp/Cpk targets, and are special characteristics treated differently from cosmetic ones?

- Traceability: Can material lots, machine parameters, inspection records, and rework history be linked at batch or serial level?

- Lifecycle control: Is there a defined maintenance interval, mold life assumption, or service cycle based on actual operating conditions?

- Change governance: Are material, sub-tier, software, or tooling changes reviewed formally before implementation?

Why GIM’s cross-sector view matters

GIM is valuable because it connects signals across semiconductor and electronics, automotive and mobility, smart agri-tech, industrial ESG and infrastructure, and precision tooling. That cross-sector transparency helps teams identify when a supplier is technically strong in one domain but underprepared for another. A factory experienced in consumer electronics may still need tighter process discipline for automotive-grade reliability, while an infrastructure supplier may need better digital traceability to support global sourcing requirements.

This broader perspective supports better investment decisions. It gives financial approvers a more realistic view of total risk, helps engineers compare like-for-like process maturity, and enables project owners to reduce surprises during qualification, ramp-up, and after-sales support.

A Practical Evaluation Framework for Procurement, Engineering, and Quality Teams

To reduce misinterpretation, companies need a repeatable evaluation model before supplier nomination and again before mass production. The most effective approach is a staged review that combines document analysis, technical benchmarking, factory process verification, and launch readiness control. In many B2B programs, this can be completed in 4 stages over 2 to 6 weeks depending on product complexity and the number of suppliers involved.

Four-stage evaluation sequence

- Requirement normalization: Convert drawings, standards, and customer notes into a shared checklist of critical-to-quality, critical-to-function, and critical-to-compliance items.

- Supplier interpretation review: Ask each vendor to state assumptions on materials, tooling, inspection, validation duration, maintenance cycle, and change control.

- Benchmark scoring: Compare suppliers on technical, operational, and commercial dimensions using weighted criteria such as 30% quality control, 25% process capability, 20% traceability, 15% delivery resilience, and 10% lifecycle service.

- Pilot and ramp control: Confirm that trial builds, first article approval, and early production lots use the same controls proposed during quoting and qualification.
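The benchmark scoring stage can be sketched as a weighted average. The weights below are the example percentages from the list above. The 0 to 5 rating scale, the supplier names, and the individual ratings are hypothetical.

```python
# Example weights from the text, in percent.
WEIGHTS = {
    "quality_control": 30,
    "process_capability": 25,
    "traceability": 20,
    "delivery_resilience": 15,
    "lifecycle_service": 10,
}


def weighted_score(ratings: dict) -> float:
    """Weighted average of per-dimension ratings (same scale as the inputs)."""
    missing = WEIGHTS.keys() - ratings.keys()
    if missing:
        raise ValueError(f"missing ratings: {sorted(missing)}")
    total = sum(WEIGHTS[name] * ratings[name] for name in WEIGHTS)
    return total / sum(WEIGHTS.values())


# Hypothetical ratings on an assumed 0-5 scale.
suppliers = {
    "Supplier A": {"quality_control": 4, "process_capability": 3,
                   "traceability": 5, "delivery_resilience": 3,
                   "lifecycle_service": 4},
    "Supplier B": {"quality_control": 3, "process_capability": 4,
                   "traceability": 2, "delivery_resilience": 5,
                   "lifecycle_service": 4},
}

ranked = sorted(suppliers, key=lambda s: weighted_score(suppliers[s]), reverse=True)
for name in ranked:
    print(f"{name}: {weighted_score(suppliers[name]):.2f}")
```

Raising an error on a missing dimension is a deliberate choice in this sketch: a supplier that has not been assessed on one criterion should not be ranked as if it had scored zero or been silently skipped.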

The table below provides a practical scoring model that can be adapted for PCBA, tooling, industrial equipment, or infrastructure components.

For distributors, agents, and channel partners, this structure is also useful in pre-sales. It creates a factual basis for recommending one supplier or solution over another without relying only on price or claimed certification status.

Questions buyers should ask before approval

- Which 5 to 10 requirements are considered critical, and how are they monitored in production?

- What is the expected validation cycle: 3 days, 2 weeks, or a full seasonal or environmental exposure window?

- How often are key tools, fixtures, or sensors calibrated and maintained?

- What changes require customer notification, and what changes can occur internally without escalation?

These questions move the conversation from basic compliance to actionable execution. That shift is where risk reduction begins.

Implementation Tips, Common Mistakes, and Cross-Industry FAQ

Even strong organizations make avoidable mistakes when relying too heavily on engineering standards alone. A frequent error is assuming that approved samples prove process readiness. Another is comparing suppliers on unit cost while leaving validation scope, maintenance expectations, or sub-tier control undefined. In regulated or high-performance sectors, these omissions often create more cost after award than during sourcing.

Implementation tips that improve consistency

- Use a single interpretation checklist across engineering, sourcing, and quality so that everyone reviews the same assumptions.

- Require suppliers to document not only which standard applies, but also how it is converted into work instructions, inspection points, and reaction plans.

- Review the first 3 production lots or the first 30 days of supply with tighter tracking for defect trends, change events, and delivery stability.

- For tooling and long-life industrial assets, define maintenance and refurbishment triggers early, such as every 100,000 cycles or after a specified wear threshold.
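A maintenance trigger of the kind described in the last tip can be written down as an explicit rule so that both parties apply it the same way. This is a minimal sketch: the 100,000-cycle limit comes from the text, while the wear limit of 0.05 mm and all names are hypothetical.

```python
def maintenance_due(cycles_since_service: int, wear_mm: float,
                    cycle_limit: int = 100_000,
                    wear_limit_mm: float = 0.05) -> bool:
    """True when either the cycle count or the measured wear reaches its limit.

    wear_limit_mm is an assumed placeholder; a real value would come from
    the tooling specification agreed with the supplier.
    """
    return cycles_since_service >= cycle_limit or wear_mm >= wear_limit_mm


print(maintenance_due(82_000, 0.02))   # neither limit reached
print(maintenance_due(82_000, 0.06))   # wear limit reached early
```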

Teams should also remember that not every gap is a supplier weakness. Sometimes the customer specification is incomplete, contradictory, or copied from another sector without proper adaptation. Good benchmarking identifies these mismatches before they become commercial conflict.

FAQ: How should buyers handle standards interpretation risk?

How do we know if a supplier truly understands our standard requirements?

Ask for a written interpretation matrix. It should map each critical requirement to process control, test method, sample size, frequency, and reaction plan. If a supplier can do this within 5 to 7 working days with minimal clarification loops, that is a strong indicator of real technical understanding.
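One way to make such a matrix reviewable is to treat each row as a structured record and flag unanswered fields. The field names below follow the items listed in the answer above. The class, the helper function, and the example rows are hypothetical.

```python
from dataclasses import dataclass, fields


@dataclass
class MatrixRow:
    requirement: str
    process_control: str
    test_method: str
    sample_size: str
    frequency: str
    reaction_plan: str


def missing_fields(row: MatrixRow) -> list:
    """Names of fields the supplier left blank for this requirement."""
    return [f.name for f in fields(row) if not getattr(row, f.name).strip()]


# Hypothetical rows from a supplier response.
complete = MatrixRow(
    requirement="Housing flatness per drawing",
    process_control="In-process check at molding cell",
    test_method="CMM measurement",
    sample_size="5 pieces per cavity",
    frequency="Every lot",
    reaction_plan="Stop, quarantine lot, notify quality engineer",
)
incomplete = MatrixRow(
    requirement="Solder joint acceptance",
    process_control="AOI after reflow",
    test_method="Visual inspection",
    sample_size="100%",
    frequency="Every board",
    reaction_plan="",
)

for row in (complete, incomplete):
    print(row.requirement, "->", missing_fields(row) or "complete")
```

A blank reaction plan is exactly the gap noted earlier under common risk signals, so surfacing it at the quotation stage is cheaper than finding it after tooling release.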

Is certification enough for supplier approval?

No. Certification confirms a framework, not the exact quality of execution for your product category. Two certified suppliers can still differ significantly in traceability depth, capability on special characteristics, and discipline in engineering change control. Certification should be one input, not the final decision.

What delivery timeline should we expect for proper benchmarking?

For many projects, a focused benchmark review can be completed in 2 to 4 weeks. Complex programs involving electronics, tooling, and infrastructure interfaces may require 4 to 8 weeks if factory verification, pilot builds, or multi-site comparisons are included.

Which teams benefit most from a benchmarking platform like GIM?

The biggest gains usually appear when engineering, procurement, quality, project management, and executive stakeholders use the same comparison logic. That alignment shortens approval cycles, improves budget accuracy, and reduces debate based on incomplete or non-comparable supplier claims.

Engineering standards remain essential, but in global manufacturing they are only the starting point. Real supply chain resilience depends on how those standards are interpreted, verified, and managed across electronics, mobility, agri-tech, industrial ESG, infrastructure, and precision tooling. If your team needs clearer supplier comparisons, lower qualification risk, and stronger cross-sector visibility, GIM can help translate written standards into measurable decision intelligence. Contact us to discuss your sourcing challenges, request a tailored benchmarking framework, or explore more solutions for complex industrial programs.

