Monday, May 22, 2024

When Cross-Sector Data Helps and When It Misleads


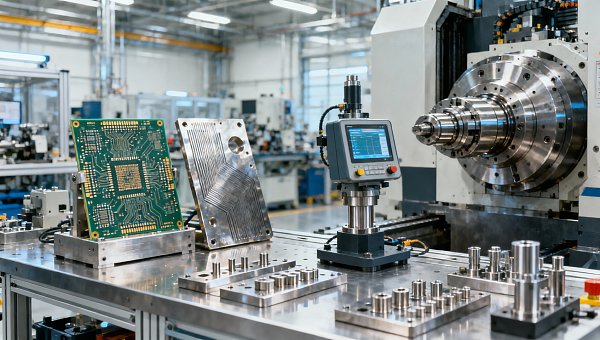

Cross-sector data can help industrial strategists and Tier-1 engineers uncover patterns that improve industrial transparency, strengthen mechanical foundations, and support smarter infrastructure benchmarking. But when context is weak, the same data can mislead decisions on HDI substrates, high-speed machining spindle speeds, material fatigue in hardware, and Rockwell hardness testing of metals. Understanding where comparison adds value, and where it distorts reality, is essential for accurate industrial analysis.

Why cross-sector benchmarking matters in modern manufacturing

Industrial teams no longer work inside clean category boundaries. A procurement manager comparing insulation performance in electronics may also need to understand thermal loading in EV assemblies, contamination control in water treatment modules, or wear behavior in precision tooling. In practice, cross-sector data helps decision-makers recognize common engineering patterns across 3 layers: material behavior, process capability, and compliance risk.

This is especially relevant when product cycles shorten to 6–18 months, qualification windows compress into 2–8 weeks, and supply chain disruptions force faster substitutions. Looking at only one sector often hides transferable lessons. Looking at too many sectors without a filter creates noise. The useful middle ground is structured benchmarking against recognized references such as ISO, IATF, and IPC standards where applicable.

Global Industrial Matrix (GIM) addresses this problem by synchronizing intelligence across Semiconductor & Electronics, Automotive & Mobility, Smart Agri-Tech, Industrial ESG & Infrastructure, and Precision Tooling. For researchers and operators, that means one comparison framework can track how mechanical loads, thermal profiles, tolerance bands, and lifecycle demands change from one environment to another instead of treating each dataset as isolated.

The value is not in collecting more numbers. The value is in comparing the right numbers under the right operating assumptions. A spindle speed range that looks efficient in one machining context may be unstable in another because tool material, coolant strategy, and duty cycle differ. The same logic applies to HDI substrates, filtration modules, tractor electronics, and structural metal parts.

What useful cross-sector data usually includes

- Comparable operating conditions, such as temperature range, vibration exposure, humidity band, duty cycle, and maintenance interval.

- Traceable performance variables, including tolerance window, wear rate trend, hardness scale, conductivity, pressure drop, or electrical integrity metrics.

- Reference standards that define how results were measured, for example ISO process rules, IATF quality discipline, or IPC design and fabrication criteria.

- Decision context covering batch size, qualification stage, supplier maturity, and field-use environment rather than lab-only conditions.

Where information researchers and operators benefit most

Information researchers need signal clarity. Operators need usable thresholds. When both groups access benchmarked cross-sector data, they can identify whether a specification is broadly robust or only narrowly valid. That distinction can save 1–2 sourcing cycles and reduce re-validation work when a supplier change or material substitution becomes necessary.

For example, a hardness result on the Rockwell scale may support incoming quality checks, but it should not be used alone to predict fatigue life under cyclic loading. A good benchmark platform connects hardness testing with geometry, heat treatment route, loading mode, and expected service duration. That is where multi-disciplinary intelligence becomes operational, not theoretical.

When cross-sector data helps and when it starts to mislead

Cross-sector data helps when the comparison target shares a functional mechanism. It misleads when people compare labels instead of engineering realities. Similar words like “durability,” “efficiency,” or “precision” often hide different test methods, different acceptable failure modes, and different field conditions. A reliable analysis starts by asking whether the compared systems fail for the same reasons and operate within similar stress envelopes.

Take HDI substrates and automotive power electronics. Both involve thermal management, signal integrity, and reliability concerns. However, stack-up design rules, copper distribution, vibration loads, and service temperature exposure can differ substantially. A material choice that appears acceptable in a controlled electronics enclosure may not perform the same way near an EV drivetrain where thermal cycling and shock loads are more severe.

The same caution applies to high-speed machining spindle speed. A spindle running at 12,000–24,000 rpm may be appropriate for one alloy and cutter geometry, but using that speed range as a generic benchmark across aerospace-grade metals, agricultural components, and infrastructure hardware can produce tool chatter, excess heat, or premature wear. Feed rate, radial engagement, lubrication, and machine rigidity matter as much as the speed number itself.

Material fatigue is another area where cross-sector comparisons are useful but risky. Looking at fatigue lessons from automotive suspension parts can help teams assessing industrial supports or mobile agri-equipment. Still, if surface finish, load frequency, corrosion exposure, or weld geometry differs, the comparison can become misleading. One incomplete benchmark can distort an entire procurement decision.

A practical comparison framework

Before using any benchmark, separate the comparison into 4 checkpoints: operating environment, failure mechanism, test method, and economic consequence. If one of these checkpoints is missing, treat the conclusion as directional rather than decisive. This approach is valuable for procurement officers, process engineers, and operators who must act quickly but cannot afford hidden mismatch risk.
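The four-checkpoint rule can be expressed as a simple completeness check. The following is a minimal Python sketch, not a GIM implementation: the `Benchmark` class and its field names are hypothetical, and a checkpoint is assumed to be "missing" when its field is empty.

```python
from dataclasses import dataclass, fields
from typing import List, Optional


@dataclass
class Benchmark:
    """Hypothetical record holding the four checkpoints named in the text."""
    operating_environment: Optional[str] = None
    failure_mechanism: Optional[str] = None
    test_method: Optional[str] = None
    economic_consequence: Optional[str] = None


def missing_checkpoints(b: Benchmark) -> List[str]:
    """Return the names of checkpoints that were not documented."""
    return [f.name for f in fields(b) if getattr(b, f.name) in (None, "")]


def conclusion_strength(b: Benchmark) -> str:
    """'decisive' only when all four checkpoints are present, else 'directional'."""
    return "decisive" if not missing_checkpoints(b) else "directional"


# Illustrative record: the source never stated how the result was measured.
spindle = Benchmark(
    operating_environment="continuous duty, flood coolant",
    failure_mechanism="tool wear",
    test_method=None,
    economic_consequence="scrap and tool replacement cost",
)
print(conclusion_strength(spindle), missing_checkpoints(spindle))
```

Returning the names of the missing checkpoints, rather than only a label, tells the reviewer what to request from the source before the benchmark can be treated as decisive.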

The table below shows where cross-sector data usually supports confident decisions and where it often introduces error.

The key insight is simple: transferable data is never context-free. GIM’s cross-sector approach is most effective when teams compare mechanisms, not marketing labels. That helps users filter reusable intelligence from attractive but low-reliability analogies.

Three warning signs that a benchmark is being overused

- The source lists a result but not the test condition, sample preparation, or standard reference.

- A single metric is treated as a full performance proxy, such as hardness standing in for fatigue or speed standing in for productivity.

- The operating environment changes from indoor controlled service to outdoor, corrosive, or shock-loaded service without adjustment.

How to evaluate cross-sector data for procurement and operational use

For buyers, the question is not whether data is available. The question is whether it is decision-grade. For operators, the concern is similar: can the benchmark support setup, inspection, maintenance, or replacement timing? A practical evaluation model uses 5 procurement dimensions: specification relevance, test comparability, supplier traceability, qualification timeline, and total operational risk.

This matters across industries because sourcing teams often compare alternatives under time pressure. A substitute material might arrive in 7–15 days instead of 4–6 weeks, but if its fatigue behavior, plating compatibility, or dimensional stability is unclear, the short lead time can lead to a much larger failure cost later. Fast sourcing only works when benchmark confidence is explicit.

GIM supports this by translating benchmark data into cross-functional procurement logic. That means a researcher can screen suppliers and technical claims, while an operator can verify what the data means for actual use: startup conditions, load variation, maintenance intervals, and inspection checkpoints. A benchmark becomes valuable when it changes action, not just reporting.

The evaluation process also benefits from staged qualification. In many industrial settings, a 3-stage approach is more realistic than immediate full approval: desktop screening, sample-based technical review, and controlled deployment. This reduces the risk of overcommitting to data that looks attractive on paper but lacks field correlation.

A workable 4-step assessment flow

- Define the use case. Specify temperature band, duty cycle, maintenance expectation, compliance needs, and acceptable deviation range.

- Check benchmark alignment. Confirm whether the compared sectors share failure modes, measurement methods, and installation realities.

- Review supply-side constraints. Include lead time, lot consistency, documentation depth, and change-control discipline.

- Run bounded validation. Use trial lots, pilot lines, or segmented deployment before scaling across multiple plants or product lines.

The following table can be used as a procurement checklist when cross-sector benchmark data influences material, process, or component selection.

A checklist like this helps both researchers and operators move from broad intelligence to practical selection. It also creates a common language between sourcing, engineering, and plant-level teams, which is often the missing link in multi-industry procurement programs.

What to ask before approving a benchmark-based substitute

Ask whether the alternate part or process has matching stress conditions, equivalent inspection methods, and a realistic lead-time advantage after qualification is counted. If a substitute saves 10 days in shipping but adds 3 weeks of internal validation, the economic gain may disappear. Good cross-sector intelligence includes both technical and operational timing.
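The lead-time trade-off above is simple arithmetic, and writing it out makes the hidden cost visible. This is a minimal Python sketch using the figures from the text; the function name is hypothetical, and a week is assumed to be 7 calendar days.

```python
def net_days_saved(shipping_days_saved: float, extra_validation_days: float) -> float:
    """Days gained (positive) or lost (negative) once qualification is counted."""
    return shipping_days_saved - extra_validation_days


# Figures from the text: 10 days saved in shipping, 3 weeks of added validation.
net = net_days_saved(shipping_days_saved=10, extra_validation_days=3 * 7)
print(net)  # -11: the substitute arrives in use 11 days later overall
```

A negative result means the "faster" substitute is slower in practice, before any technical risk is considered.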

Also ask who will use the result. A research analyst may need category-level comparability. An operator needs threshold-level clarity: acceptable hardness band, setup window, service interval, or warning criteria. GIM’s multi-disciplinary structure is useful because it bridges these different decision depths instead of forcing one summary level on every user.

Standards, context controls, and the metrics that should not stand alone

Cross-sector comparisons become much more reliable when tied to standard frameworks. ISO can help normalize process and measurement discipline. IATF is relevant where automotive-grade quality expectations influence supplier control and traceability. IPC provides important reference points for electronics and interconnect structures such as HDI substrates. Standards do not eliminate judgment, but they reduce ambiguity around how data was produced.

Even with standards, some metrics should never stand alone. Rockwell hardness is useful, but it is not a complete design-life indicator. Spindle speed is useful, but it is not a productivity guarantee. A low pressure drop in a filtration component is useful, but it is not enough without fouling behavior, cleaning cycle assumptions, and fluid quality context. The same principle applies across environmental infrastructure, mobility, smart agriculture, and precision tooling.

For operators, this means setup sheets and incoming inspection plans should include at least 3 linked categories: measured value, operating condition, and action threshold. For researchers, it means supplier benchmarking should compare documentation depth and method consistency, not just advertised performance. These controls reduce the chance that a cross-sector analogy becomes a hidden source of downstream cost.

A practical way to improve data quality is to assign every benchmark one of 3 confidence levels: transferable, conditional, or non-transferable. Transferable data shares both mechanism and test basis. Conditional data needs adjustment for environment or duty cycle. Non-transferable data may still be informative, but it should not drive sourcing or process change without fresh validation.
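The three confidence levels can be assigned with a short decision rule. The sketch below is one possible reading of the definitions above, not an established GIM method: the function and parameter names are hypothetical, and it assumes that a benchmark lacking a shared mechanism or test basis is non-transferable.

```python
from enum import Enum


class Confidence(Enum):
    TRANSFERABLE = "transferable"
    CONDITIONAL = "conditional"
    NON_TRANSFERABLE = "non-transferable"


def classify_benchmark(shares_mechanism: bool,
                       shares_test_basis: bool,
                       matches_environment_and_duty: bool) -> Confidence:
    """Assign a confidence level to a cross-sector benchmark."""
    # Assumption: without a shared mechanism AND test basis, nothing transfers.
    if not (shares_mechanism and shares_test_basis):
        return Confidence.NON_TRANSFERABLE
    # Same mechanism and test basis, but environment or duty cycle differs:
    # usable only after adjustment.
    if not matches_environment_and_duty:
        return Confidence.CONDITIONAL
    return Confidence.TRANSFERABLE


# Example: same failure mechanism and test method, different duty cycle.
level = classify_benchmark(True, True, False)
print(level.value)  # conditional
```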

Metrics that require companion data

- Hardness values should be paired with heat treatment route, microstructure, and expected cyclic load.

- Spindle speed should be paired with feed rate, tool material, depth of cut, and coolant strategy.

- Substrate reliability should be paired with stack-up details, thermal cycling profile, and assembly constraints.

- Infrastructure component performance should be paired with environmental exposure, maintenance interval, and contamination profile.
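The pairings listed above can be enforced as a completeness check on each benchmark record. This is a minimal Python sketch: the metric keys and field names are hypothetical labels for the companion data named in the list, and the example record is illustrative only.

```python
# Companion data each headline metric needs before it can be interpreted.
REQUIRED_COMPANIONS = {
    "hardness": {"heat_treatment_route", "microstructure", "expected_cyclic_load"},
    "spindle_speed": {"feed_rate", "tool_material", "depth_of_cut", "coolant_strategy"},
    "substrate_reliability": {"stack_up", "thermal_cycling_profile", "assembly_constraints"},
    "infrastructure_performance": {
        "environmental_exposure", "maintenance_interval", "contamination_profile",
    },
}


def missing_companions(metric: str, record: dict) -> set:
    """Return companion fields that are absent or empty for the given metric."""
    provided = {key for key, value in record.items() if value is not None}
    return REQUIRED_COMPANIONS[metric] - provided


# Illustrative record: a spindle speed reported with only part of its context.
record = {"feed_rate": "stated", "tool_material": "carbide", "coolant_strategy": None}
gaps = missing_companions("spindle_speed", record)
print(sorted(gaps))  # ['coolant_strategy', 'depth_of_cut']
```

A record with a non-empty gap set is a candidate for the "conditional" or "non-transferable" confidence level rather than direct use.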

A useful rule for mixed-industry teams

If the benchmark cannot answer “under what condition,” “measured how,” and “what changes in operation,” it is not ready for final selection. This rule is easy to apply and often prevents the most expensive category of error: technically true data used in the wrong decision context.

Common misconceptions and FAQ for researchers and operators

Many teams assume that more data automatically means better judgment. In reality, more unmanaged data often increases comparison error. A focused question set, a clear standard basis, and a short list of operationally relevant metrics usually outperform a large but weakly structured dataset. This is especially true when multiple sectors, suppliers, and engineering disciplines intersect in one decision path.

Another common misconception is that cross-sector data is only strategic. It is also highly practical for operators who need to set inspection frequencies, define replacement windows, or understand why a substitute behaves differently during startup, continuous use, or shutdown. Good benchmarking reduces friction between technical intent and shop-floor reality.

The questions below reflect common search intent from buyers, researchers, and end users who need usable industrial transparency rather than generic commentary.

How do I know whether a cross-sector comparison is valid?

Check 4 items first: matching failure mechanism, similar operating environment, comparable test method, and realistic economic impact. If any of these is missing, use the benchmark only for screening. If all 4 align, it can support selection and qualification planning. This method works well for components, materials, and process windows across mixed industrial categories.

What should buyers focus on when using cross-sector benchmark data?

Focus on 5 decision points: specification match, documentation quality, qualification time, supply continuity, and operational consequences. Buyers often overvalue unit price and undervalue revalidation effort. In many projects, a lower-price option loses its advantage if it adds even 2–3 extra approval steps or increases inspection frequency after launch.

Can hardness testing replace fatigue or lifecycle validation?

No. Hardness testing is useful for consistency checks and process verification, but fatigue depends on stress concentration, surface condition, geometry, residual stress, corrosion exposure, and loading pattern. Hardness can support a screening decision, yet lifecycle confidence usually requires broader analysis and, in critical cases, targeted testing under representative conditions.

How long does benchmark-based qualification usually take?

The answer varies by product criticality, but a common industrial sequence is 1–2 weeks for data screening, 2–4 weeks for sample review or trial confirmation, and additional time if field validation is required. Highly regulated or safety-sensitive applications can take longer. The important point is to include validation time when comparing “fast” alternatives.
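Adding up the stage ranges quoted above gives the minimum window to plan for. This is a minimal Python sketch using only the figures in the text; the dictionary keys are descriptive labels, and field validation is left out because the text gives no duration for it.

```python
# (min_weeks, max_weeks) for each stage, as quoted in the text.
stages_weeks = {
    "data screening": (1, 2),
    "sample review or trial confirmation": (2, 4),
}

low = sum(lo for lo, _ in stages_weeks.values())
high = sum(hi for _, hi in stages_weeks.values())
print(f"{low} to {high} weeks before any field validation")
# 3 to 6 weeks before any field validation
```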

Why work with GIM when cross-sector data must support real decisions

GIM is built for organizations that cannot afford siloed interpretation. By connecting Semiconductor & Electronics, Automotive & Mobility, Smart Agri-Tech, Industrial ESG & Infrastructure, and Precision Tooling, GIM helps teams compare systems through a shared technical logic rather than disconnected category labels. That is critical when one sourcing decision affects performance, compliance, maintenance, and supply resilience at the same time.

For information researchers, GIM helps validate whether a benchmark is transferable, conditional, or non-transferable. For operators, GIM helps translate data into setup ranges, inspection priorities, and practical risk controls. This combination supports stronger industrial transparency across mechanical, digital, and ecological foundations without turning comparison into oversimplification.

If you are comparing HDI substrates, evaluating machining windows, reviewing hardness and fatigue implications, or benchmarking infrastructure hardware across sectors, the most useful next step is a structured discussion around context. That includes 6 common consultation topics: parameter confirmation, product selection, lead-time review, custom benchmark mapping, certification requirements, and sample support planning.

Contact GIM when you need cross-sector intelligence that can stand up to procurement review, engineering scrutiny, and operational use. You can request support for benchmark interpretation, RFQ preparation, supplier comparison, qualification sequencing, standards alignment, or quotation discussions tied to actual deployment conditions rather than abstract metrics.

What you can discuss with us

- Whether a cross-sector dataset is suitable for direct selection, conditional screening, or further validation.

- How to compare technical parameters across electronics, mobility, agri-tech, infrastructure, and precision tooling without losing context.

- Which standards and documentation checkpoints to request before supplier approval or substitution.

- How to align sample review, delivery expectations, and operational acceptance criteria into one practical decision path.
