Monday, May 22, 2024

Infrastructure Benchmarking Looks Useful Until Baselines Shift

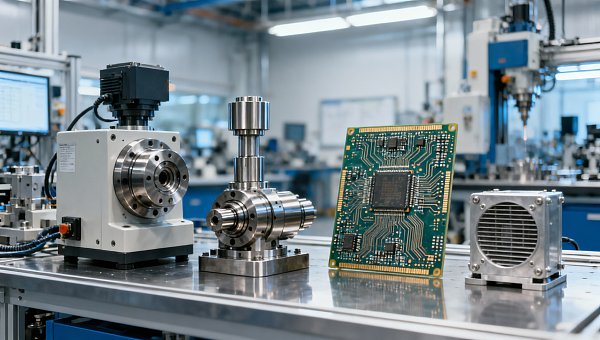

Infrastructure benchmarking appears essential for industrial strategists and Tier-1 engineers seeking transparency across complex supply chains. Yet when baselines shift, cross-sector data can distort decisions about mechanical foundations, from HDI substrates and high-speed machining spindle speeds to material fatigue in hardware and Rockwell hardness testing, turning useful comparisons into hidden operational risk.

Why infrastructure benchmarking becomes risky when the baseline is unstable

Benchmarking works only when comparison conditions stay consistent. In industrial infrastructure, that condition is often missing. A plant may compare filtration modules, tooling life, PCB substrate durability, motor efficiency, or environmental control systems across suppliers even though the test loads, ambient ranges, duty cycles, and compliance assumptions behind each figure differ. Once the baseline moves, the benchmark stops being a decision tool and becomes a source of procurement error.

This problem affects more than one sector. Electronics teams may review HDI substrate stability under one thermal profile, while automotive engineers assess powertrain hardware under another. Smart agri-tech operators may focus on outdoor dust, vibration, and moisture over 12–18 month use cycles, whereas ESG infrastructure teams may prioritize membrane fouling rates and pump energy draw over quarterly reporting intervals. The numbers look comparable, but the operating assumptions are not.

For information researchers and operators, the pain point is practical: reports arrive fast, but comparability is weak. A purchasing team may be asked to shortlist 3 suppliers in 7–10 days. Operators need parts that survive real conditions, not only favorable lab conditions. When baseline definitions are unclear, low apparent cost can conceal higher maintenance frequency, shortened replacement intervals, or quality drift across batches.

Global Industrial Matrix (GIM) addresses this by aligning benchmark interpretation across five connected pillars: Semiconductor & Electronics, Automotive & Mobility, Smart Agri-Tech, Industrial ESG & Infrastructure, and Precision Tooling. Instead of treating each data point in isolation, GIM helps decision-makers trace how testing standards, operating context, and engineering assumptions influence the result. That cross-sector view is critical when component performance in one domain affects risk in another.

Typical signs that a benchmark baseline has shifted

- Test temperatures, humidity ranges, or duty cycles differ, even if the same component category is being compared.

- One supplier reports nominal values, while another reports values after endurance testing of 500–1,000 hours.

- Hardness, fatigue, throughput, or energy data are measured under different load assumptions or sample sizes.

- Certification references such as ISO, IATF, or IPC are cited, but the benchmark does not specify which clause or test method guided the result.
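The signs above can be checked mechanically once supplier data sheets are transcribed into a common record. The following is a minimal sketch in Python, not a GIM tool: the field names (`test_temp_c`, `duty_cycle_pct`, `reporting_basis`, `test_method`) and the supplier values are hypothetical and chosen only for illustration.

```python
from collections import defaultdict

# Hypothetical condition fields; a real review would use the fields
# defined in the team's own benchmark template.
CONDITION_FIELDS = ("test_temp_c", "duty_cycle_pct", "reporting_basis", "test_method")


def shifted_fields(records):
    """Return {field: {value: [suppliers]}} for every condition field
    whose reported value is not the same across all suppliers."""
    flagged = {}
    for field in CONDITION_FIELDS:
        by_value = defaultdict(list)
        for rec in records:
            # A missing field is grouped under "unspecified".
            by_value[rec.get(field, "unspecified")].append(rec["supplier"])
        if len(by_value) > 1:
            flagged[field] = dict(by_value)
    return flagged


# Illustrative data only, not real supplier results.
records = [
    {"supplier": "A", "test_temp_c": 25, "duty_cycle_pct": 100,
     "reporting_basis": "nominal", "test_method": "method-1"},
    {"supplier": "B", "test_temp_c": 25, "duty_cycle_pct": 60,
     "reporting_basis": "after_endurance", "test_method": "method-1"},
    {"supplier": "C", "test_temp_c": 25, "duty_cycle_pct": 100,
     "reporting_basis": "nominal"},
]

for field, groups in shifted_fields(records).items():
    print(field, groups)
```

In this example the temperature matches across all three suppliers, so only the duty cycle, the reporting basis, and the unspecified test method are flagged for follow-up.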

What should procurement and operations teams compare first?

The first step is not comparing headline performance. It is comparing the basis of measurement. Before price, teams should identify 4 core benchmark anchors: operating environment, load profile, measurement method, and compliance context. This applies equally to spindle systems, EV subassemblies, water treatment modules, connector materials, and precision tooling. Without those anchors, a technical benchmark is only partially usable.
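One way to make the four anchors enforceable is to record them explicitly and reject any benchmark that leaves one blank. The sketch below assumes a simple Python record. The class name `BenchmarkAnchors`, its field names, and the spindle example are illustrative assumptions, not part of any standard or GIM schema.

```python
from dataclasses import dataclass, fields
from typing import Optional


@dataclass
class BenchmarkAnchors:
    """The four anchors named in the text; field names are illustrative."""
    operating_environment: Optional[str] = None
    load_profile: Optional[str] = None
    measurement_method: Optional[str] = None
    compliance_context: Optional[str] = None


def missing_anchors(anchors: BenchmarkAnchors) -> list[str]:
    """List the anchors a supplier benchmark leaves blank or undefined."""
    return [
        f.name for f in fields(anchors)
        if not (getattr(anchors, f.name) or "").strip()
    ]


# Hypothetical spindle benchmark that omits its load profile.
spindle = BenchmarkAnchors(
    operating_environment="enclosed cell, coolant mist",
    measurement_method="supplier internal protocol",
    compliance_context="ISO-referenced, clause not stated",
)

gaps = missing_anchors(spindle)
print("usable for direct comparison:", not gaps)
print("missing anchors:", gaps)
```

A record with any missing anchor is treated here as only partially usable, which mirrors the rule stated above. A stricter team might also reject vague entries such as "clause not stated".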

A structured review also helps non-specialist buyers communicate with operators. Procurement often works on RFQ timelines of 2–4 weeks, while the end user worries about uptime, tool wear, contamination, vibration, or thermal drift over the next 6–12 months. Benchmarking should bridge these priorities. If it fails to connect commercial comparison with operational reality, the sourcing cycle becomes slower and requalification costs rise.

The table below shows how baseline instability changes interpretation across common industrial categories. It is designed for cross-functional teams that need to review technical data, supplier claims, and plant-level usability in one place.

The pattern is consistent: the more complex the asset, the more dangerous it is to compare isolated numbers. Stable baselines support cleaner sourcing decisions, while shifted baselines inflate uncertainty around service intervals, spare inventory, and lifetime cost. This is why many industrial teams now ask not only “what is the result?” but also “under what reference conditions was the result produced?”

A practical 4-step benchmark review before RFQ release

- Define the real operating window, such as temperature range, vibration exposure, contamination level, and daily run hours.

- Check whether data came from nominal, peak, or sustained conditions and whether the sample size was representative.

- Map benchmark metrics to the relevant standards framework, including ISO, IATF, IPC, or sector-specific internal protocols.

- Ask operators to validate whether the benchmark reflects maintenance reality, replacement cycles, and start-up conditions.
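The first two steps can be sketched as a range check: does the window the supplier tested fully contain the window the plant will actually run in? The Python example below is a minimal illustration under assumed parameter names and values (`ambient_temp_c`, `daily_run_hours`, `vibration_g_rms`). None of these figures come from a real test report.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Range:
    low: float
    high: float

    def covers(self, other: "Range") -> bool:
        """True if this range fully contains the other range."""
        return self.low <= other.low and self.high >= other.high


def uncovered_parameters(operating: dict, tested: dict) -> list:
    """Return operating parameters whose real window is not fully inside
    the window the supplier tested, or that were not tested at all."""
    gaps = []
    for name, window in operating.items():
        test_window = tested.get(name)
        if test_window is None or not test_window.covers(window):
            gaps.append(name)
    return gaps


# Hypothetical plant operating window (step 1 of the review).
operating = {
    "ambient_temp_c": Range(-5, 45),
    "daily_run_hours": Range(8, 16),
    "vibration_g_rms": Range(0.0, 1.5),
}

# Hypothetical conditions transcribed from a supplier test report.
tested = {
    "ambient_temp_c": Range(20, 25),
    "daily_run_hours": Range(0, 24),
}

print(uncovered_parameters(operating, tested))
```

Here the run-hours window is covered, while ambient temperature (tested too narrowly) and vibration (not tested) are returned as gaps to raise with the supplier or validate with operators.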

How cross-sector benchmarking should be interpreted in real industrial scenarios

Cross-sector benchmarking is valuable because modern manufacturing systems are interconnected. A substrate decision can affect downstream assembly yield. A tooling decision can influence dimensional stability in automotive components. An environmental infrastructure choice can alter plant utility cost and compliance readiness. The issue is not whether cross-sector comparison is useful. It is whether the comparison captures the transfer conditions between sectors.

Take a multi-site manufacturer evaluating equipment and materials in 3 regions. One site may prioritize throughput, another contamination control, and a third water reuse performance. If each region uses different reference periods, maintenance methods, and acceptance thresholds, the enterprise dashboard may suggest consistency where there is none. GIM reduces this problem by synchronizing benchmark interpretation rather than simply aggregating supplier data.

Operators especially benefit from this approach because they work with failure modes, not abstractions. Material fatigue, spindle heat buildup, membrane fouling, connector wear, and coating breakdown all emerge over time. A benchmark captured in a narrow test window may miss these real-life transitions. For assets expected to run continuously or in repeated duty cycles across 8–16 hour shifts, baseline quality matters as much as component price.

The next table helps teams translate benchmark data into application-specific checks. It is useful during specification drafting, supplier screening, and plant acceptance planning.

These scenarios show why infrastructure benchmarking must be interpreted as a system question, not a line-item question. GIM’s value lies in connecting material, mechanical, digital, and environmental context so that benchmark data can support plant decisions, supplier qualification, and long-range sourcing with fewer blind spots.

Where baseline drift usually enters the process

During supplier data collection

Suppliers may present best-case values from different reporting windows, sometimes 24-hour tests, sometimes 30-day averages. Unless the requesting team defines the benchmark frame in advance, comparison quality declines quickly.

During internal consolidation

Engineering, procurement, and operations may each weight different indicators. One team ranks CAPEX first, another prioritizes durability, and a third focuses on compliance documentation. Without a shared baseline model, cross-functional review becomes inconsistent.

Which standards and compliance checks reduce benchmark distortion?

Standards do not eliminate benchmarking risk, but they narrow ambiguity. In multi-industry environments, ISO frameworks help normalize management and test discipline, IATF supports automotive quality rigor, and IPC is relevant where electronics interconnect quality matters. The important point is not simply naming a standard. It is confirming that the benchmarked attribute was measured under a method that is traceable, documented, and suitable for the intended application.

For procurement teams, a useful compliance review usually covers 5 checks: the applicable standard family, the test condition summary, the acceptance threshold, the batch or sample definition, and the reporting period. This level of detail prevents a common mistake: approving a technically compliant supplier whose benchmark data still does not fit the real plant environment.

For operators, standards matter because they influence maintenance predictability. A component may satisfy a specification at receipt but still underperform after repeated thermal cycling, abrasive exposure, or chemical cleaning. That is why benchmark review should extend beyond first-pass compliance. In many industrial settings, lifecycle behavior over 3, 6, or 12 months is more decision-relevant than day-one performance.

GIM helps teams link standards language with practical asset behavior. That translation is especially useful when one organization sources across electronics, mobility, agriculture, infrastructure, and tooling at the same time. The wider the sourcing footprint, the greater the need for a common benchmark interpretation framework.

A compliance-oriented benchmark checklist

- Confirm whether the reported result reflects incoming inspection, controlled laboratory testing, or field operation under routine maintenance.

- Verify whether hardness, fatigue, cleanliness, electrical integrity, or throughput values were measured before or after endurance exposure.

- Match the benchmark method to the application risk, especially when failure affects safety, quality escape, or environmental compliance.

- Request clarification if the same supplier uses different data windows for design qualification and commercial quoting.

Common misconceptions, operational risks, and FAQ

A frequent misconception is that more benchmark data automatically means better visibility. In reality, more data can amplify error if baseline logic is inconsistent. Another misconception is that a recognized standard reference makes all supplier data directly comparable. It does not. Standards create a framework, but decision quality still depends on test method discipline, application fit, and reporting transparency.

A related risk appears when teams benchmark only upstream inputs and ignore downstream consequences. For example, a small variation in substrate reliability, hardness profile, or filtration stability may create larger effects later in assembly yield, maintenance hours, or compliance reporting. Benchmarking should therefore follow the chain from component behavior to system outcome.

The final risk is timing. Under delivery pressure, teams may compress technical comparison into a few days and skip operator review. That decision often looks efficient in the first 2 weeks, then becomes expensive in the next 2 quarters. A disciplined benchmark review does not need to be slow, but it does need a clear baseline and a defined approval path.

The questions below reflect what industrial researchers and end users commonly ask when they need practical guidance rather than abstract benchmarking theory.

How do I know whether two infrastructure benchmarks are truly comparable?

Check at least 4 items: operating conditions, test duration, measurement method, and acceptance threshold. If even one differs materially, the comparison may still be informative, but it should not be treated as direct equivalence. This is especially important for fatigue, sustained output, filtration stability, and hardness-related wear performance.
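The four-item check can be expressed as a small comparison routine. The following Python sketch is one possible reading of "differs materially": categorical items must match exactly, and numeric items must agree within a relative tolerance. The 10% tolerance, the dictionary keys, and the two benchmark records are assumptions made for illustration.

```python
import math

# The four items named above; dictionary keys are illustrative.
CATEGORICAL = ("operating_conditions", "measurement_method")
NUMERIC = ("test_duration_h", "acceptance_threshold")


def compare_basis(a: dict, b: dict, rel_tol: float = 0.10) -> list:
    """Return the items on which two benchmarks differ materially.

    rel_tol is an assumed cut-off for a "material" numeric difference;
    the right value depends on the metric and the application.
    """
    differing = [k for k in CATEGORICAL if a[k] != b[k]]
    differing += [
        k for k in NUMERIC
        if not math.isclose(a[k], b[k], rel_tol=rel_tol)
    ]
    return differing


def verdict(differing: list) -> str:
    """Direct equivalence only when no item differs materially."""
    return "direct comparison" if not differing else "directional only"


# Hypothetical fatigue benchmarks from two suppliers.
bench_a = {"operating_conditions": "lab, 23 C", "measurement_method": "method-1",
           "test_duration_h": 500, "acceptance_threshold": 0.95}
bench_b = {"operating_conditions": "lab, 23 C", "measurement_method": "method-1",
           "test_duration_h": 1000, "acceptance_threshold": 0.95}

diff = compare_basis(bench_a, bench_b)
print(verdict(diff), diff)
```

Because the two test durations (500 and 1,000 hours) differ by far more than the assumed tolerance, the pair is reported as "directional only": still informative, but not a direct equivalence.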

Which teams should be involved before making a benchmark-based sourcing decision?

At minimum, involve procurement, the responsible engineer, and the operator or maintenance owner. In higher-risk categories, quality and compliance should also review. A 3-to-5 person review loop is often enough to catch baseline mismatch before RFQ finalization or supplier nomination.

What is the usual time frame for a useful benchmark review?

For repeat categories with defined templates, an initial review may take 5–7 working days. For new categories spanning multiple sectors or standards, 2–4 weeks is more realistic. The goal is not delay. The goal is to avoid making a 12-month sourcing commitment based on a 1-page comparison with unstable assumptions.

Can cross-sector data still be useful if the baseline is not identical?

Yes, but it should be used directionally, not as direct proof. Cross-sector data is valuable for identifying patterns, likely risk areas, and alternative design paths. It becomes procurement-grade only after the baseline gaps are documented and normalized for the intended application.

Why work with GIM when benchmark decisions affect procurement, operations, and risk?

When baselines shift, industrial teams do not just need more data. They need interpretable, cross-sector benchmark intelligence that connects hardware performance, standards language, and operational use. GIM is built for that need. By synchronizing insights across Semiconductor & Electronics, Automotive & Mobility, Smart Agri-Tech, Industrial ESG & Infrastructure, and Precision Tooling, GIM helps teams compare unlike datasets with greater discipline and fewer hidden assumptions.

This matters for both researchers and operators. Researchers need a clearer path from supplier claim to sourcing recommendation. Operators need confidence that the selected solution will hold up under actual loads, environmental conditions, and maintenance cycles. GIM supports both by framing benchmarks around verifiable reference conditions and internationally recognized standards rather than isolated headline numbers.

If you are reviewing infrastructure benchmarking for filtration modules, EV-related hardware, HDI substrates, machining systems, materials, or cross-site industrial assets, you can consult GIM on specific decision points. Typical discussion areas include parameter confirmation, baseline alignment, supplier comparison logic, delivery cycle expectations, certification relevance, sample evaluation criteria, and customization needs for different operating environments.

A productive inquiry usually starts with 3 items: your application scenario, the metrics you are comparing, and the standards or reporting methods already referenced in your data set. From there, GIM can help refine selection criteria, identify hidden benchmark drift, and support quotation or qualification discussions with a more reliable technical basis.

- Request support for parameter confirmation when supplier metrics seem inconsistent or incomplete.

- Ask for selection guidance when comparing alternatives across performance, lifecycle, and compliance priorities.

- Discuss delivery timelines, sample support, and qualification checkpoints before moving into RFQ or pilot phases.

- Use GIM’s cross-sector perspective to reduce hidden risk where electronics, mobility, agri-tech, infrastructure, and tooling data intersect.
